Google has unveiled TurboQuant, a novel memory compression algorithm that cuts the memory AI models require during inference by a factor of six, according to research disclosed this week. The technique addresses one of the most persistent bottlenecks in enterprise AI deployment: the substantial memory overhead required to run large language models in production environments.

The compression method, detailed in a paper released Wednesday, maintains model accuracy within 1% of uncompressed baselines whilst dramatically reducing the memory footprint needed to serve predictions. According to TechCrunch AI, Google researchers achieved the 6x compression ratio across multiple model architectures, including transformer-based systems that power most contemporary AI applications.

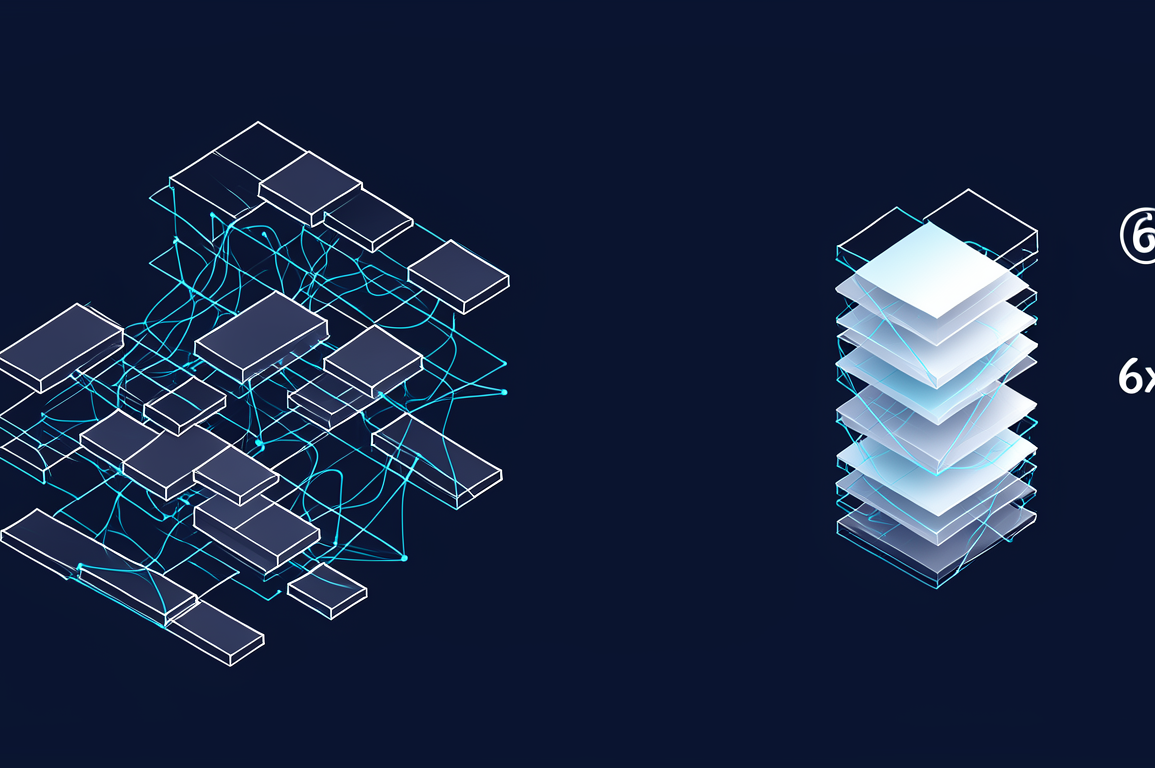

TurboQuant employs adaptive quantisation techniques that dynamically adjust precision levels based on the sensitivity of different model components. Unlike traditional uniform quantisation approaches that apply the same bit-width reduction across all parameters, the algorithm allocates higher precision to critical weights whilst aggressively compressing less sensitive portions of the network.
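The contrast between uniform and sensitivity-aware quantisation can be illustrated with a small sketch. This is not TurboQuant's algorithm, which the article does not specify in code-level detail. It is a generic mixed-precision example, assuming simple symmetric integer quantisation, a hypothetical per-group sensitivity score, and a hypothetical threshold that decides which groups keep 8 bits and which are squeezed to 3 bits. The group names and scores are invented for illustration.

```python
import random

def quantize_symmetric(values, bits):
    """Uniform symmetric quantisation to `bits` bits, then dequantisation.

    Returns the reconstructed floats so the rounding error can be measured.
    """
    if bits < 2:
        raise ValueError("need at least 2 bits for a signed symmetric range")
    qmax = 2 ** (bits - 1) - 1            # e.g. 3 for 3-bit, 127 for 8-bit
    max_abs = max(abs(v) for v in values)
    scale = max_abs / qmax if max_abs > 0 else 1.0
    # Round to the nearest integer level, clamp to the signed range, map back.
    return [max(-qmax, min(qmax, round(v / scale))) * scale for v in values]

def mean_squared_error(a, b):
    return sum((x - y) ** 2 for x, y in zip(a, b)) / len(a)

def mixed_precision_quantize(groups, sensitivity, threshold, high_bits=8, low_bits=3):
    """Give `high_bits` to groups whose (hypothetical) sensitivity exceeds
    `threshold` and `low_bits` to the rest; returns {name: (bits, weights)}."""
    result = {}
    for name, weights in groups.items():
        bits = high_bits if sensitivity[name] > threshold else low_bits
        result[name] = (bits, quantize_symmetric(weights, bits))
    return result

# Usage with made-up weight groups and made-up sensitivity scores.
random.seed(0)
groups = {
    "attention.q_proj": [random.gauss(0, 1) for _ in range(256)],
    "mlp.down_proj": [random.gauss(0, 1) for _ in range(256)],
}
sensitivity = {"attention.q_proj": 0.9, "mlp.down_proj": 0.1}
out = mixed_precision_quantize(groups, sensitivity, threshold=0.5)
for name, (bits, deq) in out.items():
    print(name, bits, "bits, MSE =", round(mean_squared_error(groups[name], deq), 5))
```

The sensitive group keeps a fine 8-bit grid while the less sensitive group accepts a coarser 3-bit grid and a larger reconstruction error, which is the trade-off the paragraph above describes.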

The technical approach builds upon recent advances in mixed-precision inference, but introduces a runtime profiling system that identifies compression opportunities specific to individual deployment contexts. This context-aware optimisation enables the algorithm to achieve compression ratios significantly beyond what static quantisation methods deliver, according to analysis from Ars Technica AI.
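The article does not describe how TurboQuant turns profiling results into precision choices. One common way to frame the problem is as bit allocation under a memory budget, sketched below under loud assumptions: each layer already has a hypothetical profiled `sensitivity` score, the allowed bit-widths are 2, 4 and 8, and a simple greedy heuristic upgrades the most sensitive layers first. The `Layer` class and `allocate_bits` function are illustrative names, not part of any published API.

```python
from dataclasses import dataclass

@dataclass
class Layer:
    name: str
    num_params: int
    sensitivity: float  # hypothetical profiled score: higher = more accuracy lost when compressed

def allocate_bits(layers, budget_bits, choices=(2, 4, 8)):
    """Greedy mixed-precision allocation under a total memory budget (in bits).

    Every layer starts at the lowest bit-width; the remaining budget is then
    spent raising the most sensitive layers as far as it allows.
    """
    choices = sorted(choices)
    plan = {layer.name: choices[0] for layer in layers}
    used = sum(layer.num_params * choices[0] for layer in layers)
    if used > budget_bits:
        raise ValueError("budget too small even at the lowest precision")
    for layer in sorted(layers, key=lambda x: x.sensitivity, reverse=True):
        for bits in choices[1:]:
            extra = layer.num_params * (bits - plan[layer.name])
            if used + extra <= budget_bits:
                used += extra
                plan[layer.name] = bits
    return plan, used

# Usage: three made-up layers and a budget averaging 4 bits per parameter.
layers = [
    Layer("embed", 1_000_000, 0.2),
    Layer("attn", 2_000_000, 0.9),
    Layer("mlp", 4_000_000, 0.4),
]
total_params = sum(layer.num_params for layer in layers)
budget = 4 * total_params
plan, used = allocate_bits(layers, budget)
print(plan, "average bits/param:", used / total_params)
```

With these invented numbers the most sensitive layer is promoted to 8 bits and the large, less sensitive layer stays at 2 bits, while the average still meets the budget. A context-aware system would rerun the profiling on the deployment's own inputs, so the same model could receive a different plan in a different setting.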

For enterprise AI deployments, the memory efficiency gains translate directly into cost reductions and expanded deployment options. Cloud providers currently charge premium rates for high-memory instances required to serve large models, making inference costs a substantial operational expense. A 6x reduction in memory requirements could enable organisations to serve the same workloads on significantly cheaper infrastructure or deploy larger models within existing memory budgets.
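The scale of that saving is easy to check with back-of-the-envelope arithmetic. The article does not say which part of inference memory (weights, activations or the key-value cache) the 6x figure applies to, so this sketch assumes, purely for illustration, that it applies to model weights stored at 16 bits per parameter, and uses a hypothetical 70-billion-parameter model. It ignores activations, caches and runtime overhead.

```python
def weight_memory_gb(num_params, bits_per_param):
    """Memory needed to store the weights alone, in gigabytes (1 GB = 1e9 bytes)."""
    return num_params * bits_per_param / 8 / 1e9

params = 70e9                          # hypothetical 70-billion-parameter model
baseline_bits = 16                     # assumed FP16/BF16 baseline
compressed_bits = baseline_bits / 6    # average bit-width implied by a 6x reduction

baseline = weight_memory_gb(params, baseline_bits)
compressed = weight_memory_gb(params, compressed_bits)
print(f"baseline:   {baseline:.1f} GB")
print(f"compressed: {compressed:.1f} GB ({compressed_bits:.2f} bits/param)")
```

Under these assumptions the weights shrink from about 140 GB to roughly 23 GB, an average of about 2.67 bits per parameter.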

The compression breakthrough particularly benefits organisations running inference at scale, where memory constraints often force compromises between model capability and deployment economics. Financial services firms, healthcare providers, and e-commerce platforms—sectors where AI inference costs represent material operational expenses—stand to gain most from the efficiency improvements.

However, the announcement intensifies competitive pressure on specialised AI inference providers that have built businesses around optimising model serving efficiency. Companies such as Groq and SambaNova have differentiated themselves through custom silicon and software optimisations that reduce inference costs. If hyperscalers can achieve comparable efficiency gains through algorithmic improvements alone, the value proposition for specialised inference infrastructure becomes less compelling.

The compression technique also has implications for edge deployment scenarios, where memory constraints are even more severe than in cloud environments. Mobile devices, IoT sensors, and embedded systems could run substantially more capable models if memory requirements decrease by the reported magnitude. This could accelerate AI adoption in resource-constrained environments where current models remain impractical.

Google has not disclosed whether TurboQuant will be made available as open-source software or remain proprietary technology integrated into Google Cloud services. The company’s approach to distribution will significantly influence how quickly the broader AI industry can capitalise on the efficiency gains. Previous compression techniques from major research labs have followed both paths, with some released openly and others retained as competitive advantages.

The research arrives as the AI industry confronts growing concerns about the computational and financial sustainability of increasingly large models. Training costs for frontier systems now reach tens of millions of dollars, whilst inference costs for high-volume applications can exceed training expenses over a model’s operational lifetime. Techniques that reduce serving costs without sacrificing capability address a critical economic constraint on AI deployment.

Industry observers will be watching for independent validation of the compression ratios and accuracy preservation claims, as well as details about which model architectures and use cases benefit most from the technique. The practical impact will depend on how readily the algorithm integrates into existing inference pipelines and whether it introduces latency penalties that offset the memory efficiency gains.

Google’s memory compression breakthrough represents a meaningful advance in AI inference efficiency, with direct implications for deployment economics across enterprise and edge environments. The technique’s ultimate significance will depend on its accessibility to the broader AI community and its performance characteristics in production workloads beyond controlled research settings.