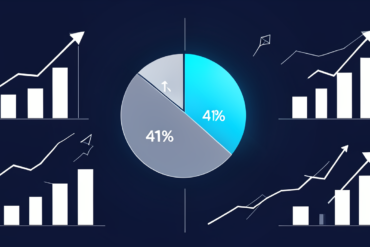

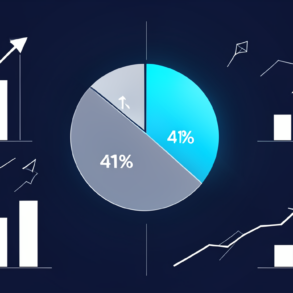

A widening gap between artificial intelligence adoption and consumer trust threatens to reshape enterprise AI strategy, according to new polling data from Quinnipiac University that reveals Americans are simultaneously embracing and doubting the technology at unprecedented rates.

The survey, reported by TechCrunch AI, captures a paradox now confronting every organisation deploying AI systems: usage climbs whilst confidence erodes, creating a trust deficit that no amount of technical sophistication can resolve through engineering alone.

This divergence marks a critical inflection point for the AI industry. Enterprises have spent the past 18 months racing to integrate large language models and generative AI into customer-facing applications, banking on sustained adoption to justify billion-dollar infrastructure investments. The Quinnipiac findings suggest that adoption momentum may mask a more troubling reality—users engage with AI tools out of necessity or convenience, not conviction.

The transparency gap identified in the polling data points to a fundamental misalignment between vendor priorities and user expectations. Whilst AI companies have focused on capability demonstrations—longer context windows, multimodal processing, faster inference—consumers increasingly demand explanations of how systems reach conclusions, what data informs outputs, and where accountability resides when tools err.

For enterprise software vendors, the implications are immediate and material. Trust erosion creates an opening for competitors emphasising explainability and audit trails over raw performance metrics. Organisations such as Anthropic that position constitutional AI and interpretability as differentiators stand to gain market share amongst risk-averse enterprise buyers, particularly in regulated sectors where unexplainable outputs carry compliance risk.

The polling data also intensifies pressure on hyperscalers—Amazon Web Services, Microsoft Azure, and Google Cloud—whose AI service revenue depends on enterprise customers maintaining confidence in the underlying models. These platforms now face demands for enhanced logging, decision provenance tracking, and bias detection capabilities that add cost and complexity to already expensive infrastructure.

Financial services firms and healthcare providers, already navigating stringent regulatory frameworks, confront the most acute challenges. The trust gap validates concerns raised by compliance officers who have resisted aggressive AI deployment timelines, and strengthens the hand of internal stakeholders advocating for human-in-the-loop architectures over full automation.

The phenomenon mirrors historical technology adoption patterns where initial enthusiasm gives way to scepticism as limitations become apparent. The dot-com era saw similar disconnects between usage and trust, as did early social media adoption before privacy concerns emerged. What distinguishes the current moment is the compressed timeline—AI has moved from novelty to ubiquity in under two years, leaving little time for trust-building institutional safeguards to develop.

Regulatory momentum will likely accelerate in response to these findings. Policymakers in Brussels, Washington, and London have already drafted AI governance frameworks; polling evidence of public distrust provides political cover for more aggressive intervention. The European Union’s AI Act implementation, scheduled for phased enforcement through 2025 and 2026, may serve as a template for jurisdictions that have previously taken lighter-touch approaches.

The business model implications extend beyond compliance costs. Marketing organisations built on AI-generated content face credibility questions. Customer service operations replacing human agents with chatbots must weigh efficiency gains against satisfaction scores. Product teams integrating AI features confront the reality that capabilities users don’t trust generate limited value regardless of technical merit.

Vendors that move decisively to address transparency concerns, through comprehensive model cards, accessible explanations of training data, and clear delineation of capability boundaries, position themselves advantageously as trust becomes a competitive differentiator rather than a baseline expectation.

The trajectory of enterprise AI investment over the next 12 to 18 months will reveal whether vendors treat trust as a technical problem amenable to engineering solutions, or as a social contract requiring fundamental changes to development practices, deployment models, and accountability structures. The Quinnipiac data suggests the latter approach is no longer optional.