A persistent shortage of dynamic random-access memory threatens to constrain artificial intelligence infrastructure expansion through at least 2027, with manufacturers expected to satisfy only 60% of projected demand, according to industry analysis reported by The Verge AI. The supply gap represents a fundamental bottleneck for AI compute scaling at a moment when enterprise adoption is accelerating rapidly.

The shortage stems from the industry’s structural inability to expand high-bandwidth memory (HBM) production capacity quickly enough to match demand from AI accelerators. Unlike previous semiconductor shortages driven by demand spikes or supply chain disruptions, this constraint reflects a multi-year capital investment cycle required to build new fabrication capacity—a timeline that cannot be compressed regardless of market incentives.

The business implications cascade through the AI infrastructure stack. Cloud providers face constrained ability to expand GPU clusters, potentially leading to allocation rationing similar to the compute shortages experienced in 2023. Enterprises planning on-premises AI deployments may encounter extended lead times and premium pricing for server configurations. Startups dependent on readily available compute capacity could find themselves at a competitive disadvantage against established players with reserved capacity.

The constraint particularly affects AI training workloads, which require substantial memory bandwidth to feed data to processors efficiently. Inference workloads, which serve trained models to end users, are generally less memory-intensive but still face pressure as organisations scale deployment. The 60% supply coverage figure implies a 40% shortfall, suggesting that roughly two-fifths of planned AI infrastructure expansion could face delays or require architectural compromises.
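The arithmetic behind the coverage figure can be sketched in a few lines. This is a minimal illustration, not a forecasting model: the 60% coverage value comes from the reported analysis, while the function names and the 1,000-server plan are hypothetical and chosen only to make the numbers concrete. It also assumes the shortfall falls evenly across all buyers, which is unlikely to hold in practice.

```python
def shortfall_fraction(coverage: float) -> float:
    """Return the share of demand left unmet for a given supply coverage."""
    if not 0.0 <= coverage <= 1.0:
        raise ValueError("coverage must be between 0 and 1")
    return 1.0 - coverage


def unmet_units(planned_units: float, coverage: float) -> float:
    """Units of planned demand that cannot be supplied at this coverage."""
    return planned_units * shortfall_fraction(coverage)


coverage = 0.60  # supply coverage figure reported in the article

print(f"Unmet share of demand: {shortfall_fraction(coverage):.0%}")

# Hypothetical plan of 1,000 accelerator servers, for illustration only.
print(f"Servers without memory supply: {unmet_units(1000, coverage):.0f}")
```

In practice, buyers with reserved capacity would likely see a smaller gap than this even split implies, and spot-market buyers a larger one.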

Memory manufacturers stand to benefit from sustained pricing power, a reversal from the cyclical oversupply conditions that have historically plagued the DRAM industry. Samsung, SK Hynix, and Micron—which collectively control the HBM market—have announced capacity expansion plans, but new fabrication facilities require 18 to 24 months from groundbreaking to production. This timeline pushes meaningful supply relief into 2026 at the earliest, with full market balance unlikely before 2027.
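To see how the 18-to-24-month build time translates into calendar dates, the sketch below adds that range to a groundbreaking date. The lead-time range is the one quoted above. The groundbreaking date of July 2024 is purely an assumption for illustration and is not taken from any manufacturer announcement. The helper names are likewise hypothetical.

```python
from datetime import date


def add_months(start: date, months: int) -> date:
    """Shift a date by whole months, pinned to the first day of the month."""
    index = start.year * 12 + (start.month - 1) + months
    return date(index // 12, index % 12 + 1, 1)


def production_window(groundbreaking: date,
                      min_months: int = 18,
                      max_months: int = 24) -> tuple[date, date]:
    """Earliest and latest first-production dates for a new fab."""
    return (add_months(groundbreaking, min_months),
            add_months(groundbreaking, max_months))


# Assumed groundbreaking date, for illustration only.
groundbreaking = date(2024, 7, 1)
earliest, latest = production_window(groundbreaking)
print(f"First production between {earliest:%B %Y} and {latest:%B %Y}")
```

Under this assumed start date, first output lands in 2026. A later groundbreaking shifts the whole window forward by the same amount, and the sketch does not model the additional time needed to ramp from first production to volume supply.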

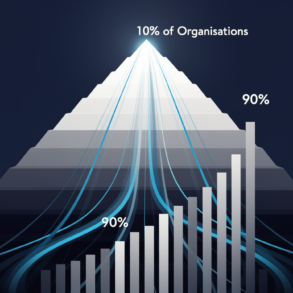

The shortage creates asymmetric advantages for large technology companies with existing supplier relationships and forward purchase agreements. Hyperscale cloud providers have reportedly secured multi-year supply commitments, insulating their infrastructure roadmaps whilst smaller competitors face spot market volatility. This dynamic could accelerate industry consolidation as organisations lacking guaranteed memory supply struggle to compete.

Alternative architectures may gain traction as organisations seek to circumvent memory constraints. Processing-in-memory designs, which reduce data movement between processor and memory, represent one technical response. More efficient model architectures that reduce memory footprint during training offer another path. However, these approaches require significant engineering investment and may not suit all workloads.

The constraint also raises questions about AI capability timelines. The scaling-driven approach behind much recent progress depends on a steadily growing supply of compute. If memory shortages limit the construction of larger training clusters, the pace of capability improvement could decelerate, at least for approaches dependent on massive scale.

Market observers should monitor several indicators: HBM pricing trends, which will signal whether supply is loosening; cloud provider capacity allocation policies, which may shift toward longer-term commitments; and announcements of alternative memory technologies reaching production readiness. Quarterly earnings from memory manufacturers will provide visibility into capacity expansion timelines and customer demand patterns.

The memory shortage represents a sobering reminder that AI infrastructure depends on physical manufacturing capacity that cannot scale at software speed. Organisations building AI strategies must now account for hardware constraints that may persist for years, fundamentally altering planning assumptions about compute availability and cost.